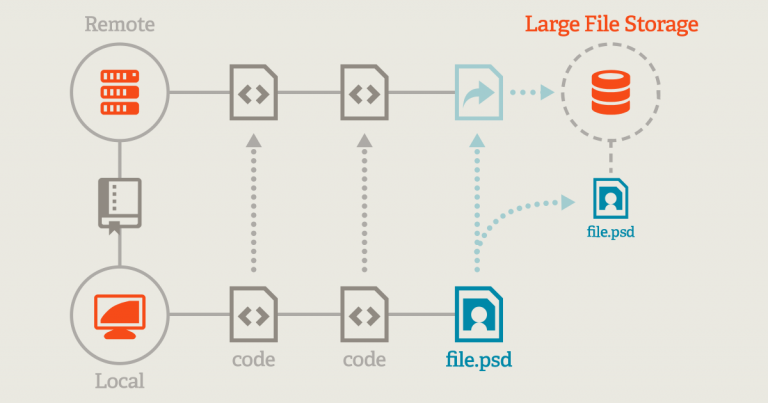

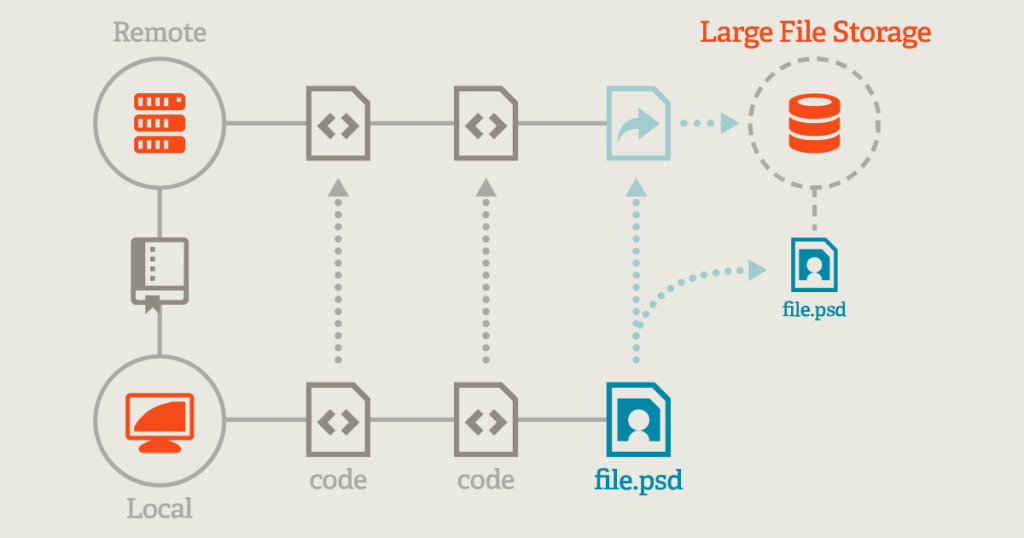

I am evaluating git solutions for large files. There is no pressing urgency in my evaluation since I have a working not-entirely-git solution, but I wanted to know what else is out there.

From my (admittedly light) research, it seems like the two major players are git annex and git LFS. And it really seems that the latter is "winning". I have to say, I am a bit surprised, given that it has, at least to me, one major flaw: you need a special server to run it. While I do use gitlab for work and github for some personal projects, I also just use SSH-based remotes on shared servers. git annex lets you directly use many types of remotes. It does seem like git-annex may be slightly more complicated, though it doesn't seem too bad. And who ever said git didn't have a learning curve?

Actually, to be honest, my favorite solution so far has been git-fat, since (a) my primary programming competency is python, (b) I have access to rsync on my platforms (no windows), and (c) it is pretty lightweight. But git-fat doesn't have the support that annex or lfs has.

Anyway, is LFS just the leader because it is supported (and created?) by github? Or is it technically better?

Finally, on a separate note, do you think git will ever adopt any one method as blessed and include it in its own implementation (hence removing the issue of special remotes)?

I've only used Git LFS, but after studying the Git Annex Walkthrough, I think Git LFS is far superior, for a subtle but critical reason: with Git LFS, new developers cloning a Git LFS-enabled repo for the first time don't need to know anything about it. They can use "git lfs clone" to have that initial clone come down faster, but they don't have to. "git clone" alone is all they need.

Based on my reading just now (mainly Git Annex vs. Git LFS), with Git Annex new developers cloning the repo for the first time must also remember to invoke "git annex sync --content" after cloning. They must also remember to run that same command after adding new files. While it probably does not seem like a lot to remember (one extra command to type), in my experience adding one extra command to a workflow can impair productivity pretty significantly, especially if people sometimes forget to run that command.

I also think vanilla git is a worthy competitor in this space. It satisfies the use case ("get me the files from the server"). It's just that some repos get a bit pathological around big binary files, and Git LFS can be a powerful band-aid in those cases.

I'm developing the website for my business and I have a mix of code and images in my git repository. Since everyone seems to know that you shouldn't keep large binary files in your git repo, I decided to see what the current solutions to that problem are.

After doing a little bit of searching, I narrowed things down to git lfs and git annex. Git lfs looks so nice and simple, except I'm not using github. Sure, you can set up your own central git lfs server yourself, but that suddenly sounded not so nice and simple. The website for git annex immediately hits you with all the power and flexibility that it has, and I was turned off by that complexity. I did like that it doesn't require me to set up any kind of central server, so I didn't reject it outright. After some digging I found out that it does actually support a usage that looks a lot like git lfs, where you can configure it to automatically manage certain sets of files and then you just use git commands like normal.

Here's how you set it up in your git repo (hopefully before you have committed any binary files to git; see my next blog post about fixing that):

git annex config --set annex.largefiles 'mimeencoding=binary and largerthan=1b'

That's it! Now just use git commands like normal and annex will take care of binary files for you.
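For reference, the git annex setup from the post could be wrapped up roughly like this. It is only a sketch under some assumptions: git and git-annex are installed, the directory is already a git repository, and `git annex init` has to be run first (that step is not in the surviving text of the post, so treat it as my assumption). The helper name `setup_annex` is made up for illustration.

```shell
#!/bin/sh
# Sketch: turn on git-annex's automatic handling of binary files in a repo.
# setup_annex is a hypothetical helper name, not part of git or git-annex.
setup_annex() {
    repo_dir="$1"
    (
        # Run in a subshell so the caller's working directory is untouched.
        cd "$repo_dir" || exit 1
        # Assumed prerequisite: initialize git-annex state in this repository.
        git annex init || exit 1
        # The setting from the post: treat any file that is detected as binary
        # and is larger than 1 byte as a "large file" to be kept in the annex.
        git annex config --set annex.largefiles \
            'mimeencoding=binary and largerthan=1b' || exit 1
        # Read the value back to confirm it was recorded.
        git annex config --get annex.largefiles
    )
}
```

Calling `setup_annex /path/to/repo` once per repository should be enough, since `git annex config` stores the setting in the repository itself rather than in one clone's local config. After that, files matching the expression are meant to be picked up by the annex when added, which is the "just use git commands like normal" workflow the post describes.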
